What's in store for PHP performance?

As web application architectures increasingly move toward microservices that communicate over the network (via HTTP or WebSockets), response time grows in importance. Because an application powered by external APIs is only as fast as its slowest dependency, developers need to focus on response time (milliseconds per response) instead of just throughput (requests per second), which is the norm for benchmarks.

PHP itself can be scaled horizontally quite easily due to its shared-nothing architecture, which allows you to just add more nodes to deliver ever higher throughput. Optimising for minimum response time is not so easy, as CPUs simply don't get exponentially faster these days.
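A back-of-the-envelope sketch of the difference, in Python for brevity. It assumes a page that makes three synchronous backend calls with made-up latencies, and a cluster where each worker handles one request at a time; both functions are illustrative and not taken from any benchmark:

```python
def sequential_response_time_ms(call_latencies_ms):
    """Synchronous code waits for each backend call in turn, so latencies add up."""
    return sum(call_latencies_ms)


def cluster_throughput_rps(nodes, workers_per_node, response_time_ms):
    """Each busy worker completes 1000 / response_time_ms requests per second."""
    return nodes * workers_per_node * 1000.0 / response_time_ms


# Hypothetical latencies (ms) of three API calls made while rendering one page.
latency = sequential_response_time_ms([20, 35, 120])

for nodes in (1, 2, 4):
    rps = cluster_throughput_rps(nodes, workers_per_node=16, response_time_ms=latency)
    # Throughput grows with every node added; the response time stays the same.
    print(f"{nodes} node(s): {rps:.0f} req/s, {latency} ms per request")
```

Adding nodes multiplies throughput, but each visitor still waits 175 ms for the page; only making the calls faster, or not waiting on them one by one, brings that number down.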

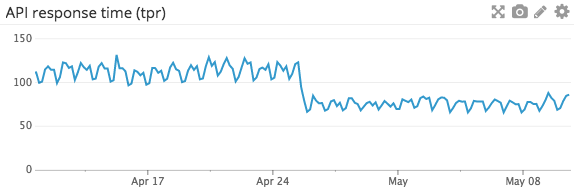

PHP 7 improves response times significantly, as the recent example graph from Dailymotion's PHP 7 upgrade shows:

In addition, developers can place intermediary technologies, such as a dumb data pump written in PHP, JavaScript, Go or something else, in front of a complex application to serve data from a simplified data source with faster response times.
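A minimal sketch of such a data pump, using only Python's standard library. It assumes the complex application periodically exports pre-computed records into a simple store; a plain dict stands in for that store, and the `SNAPSHOT` contents, paths and port are hypothetical:

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical pre-computed data, exported by the complex application into a
# simple store. A dict keyed by URL path stands in for that store here.
SNAPSHOT = {
    "/products/42": {"id": 42, "name": "Widget", "price": 9.99},
}


class DataPumpHandler(BaseHTTPRequestHandler):
    """Serves stored JSON as-is: no framework, no business logic."""

    def do_GET(self):
        record = SNAPSHOT.get(self.path)
        status = 200 if record is not None else 404
        payload = record if record is not None else {"error": "not found"}
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Silence the default per-request logging to stderr.
        pass


def make_server(port=8080):
    """Bind the pump to localhost; call .serve_forever() on the result to run it."""
    return HTTPServer(("127.0.0.1", port), DataPumpHandler)
```

The trade-off of this design is that responses are only as fresh as the last export from the complex application.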

Async just means less waiting (for nothing)

PHP 7.0 is hardly the end of the road for PHP developers when it comes to seeking lower response times. A quick win is simply to spend less time waiting during processing.

Asynchronous patterns have been popularised for backend developers by Node.js and Go's goroutines. PHP is synchronous by default, which makes it easy to reason about, as code executes in a predictable manner, from top to bottom. The downside is that it has to wait for each task to complete before moving on to the next one.

Asynchronous code, on the other hand, can continue to execute even though some of its tasks are still pending. This makes programming more difficult, but can also yield a net improvement in performance, as slow tasks can effectively be handled concurrently instead of one after another. In practice, these slow tasks are typically disk reads or database queries.
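A minimal sketch of the difference, using Python's asyncio rather than any PHP library. `query()` is hypothetical and `asyncio.sleep()` stands in for waiting on a database or disk; everything runs on a single thread, so this shows overlapping waits (concurrency), not parallel CPU work:

```python
import asyncio
import time


async def query(name: str, seconds: float) -> str:
    """Hypothetical I/O-bound task; the sleep stands in for waiting on a database."""
    await asyncio.sleep(seconds)
    return name


async def run_sequentially() -> list:
    # Each query must finish before the next one starts, so the waits add up.
    return [await query("users", 0.2), await query("orders", 0.2), await query("stock", 0.2)]


async def run_concurrently() -> list:
    # All three queries are started together; the total wait is bounded by the slowest.
    return await asyncio.gather(query("users", 0.2), query("orders", 0.2), query("stock", 0.2))


def timed(coro_fn):
    """Run a coroutine function to completion and measure wall-clock time."""
    start = time.perf_counter()
    result = asyncio.run(coro_fn())
    return result, time.perf_counter() - start


seq_result, seq_elapsed = timed(run_sequentially)
con_result, con_elapsed = timed(run_concurrently)
print(f"sequential: {seq_elapsed:.2f}s, concurrent: {con_elapsed:.2f}s")
```

Both versions return the same results in the same order; on a typical machine the sequential one takes roughly 0.6 s and the concurrent one roughly 0.2 s.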

JavaScript is the poster child of async programming and has multiple tools for it: callbacks, promises, generators and more. But in 2017, the ECMAScript standard will receive the Async/Await keywords, which allow you to write asynchronous code that looks like synchronous code. This is fast becoming the paradigm for JavaScript developers writing async code, instead of hard-to-reason-about generators that are better suited to lower-level tasks.
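To illustrate the readability argument, here is the same two-step lookup written twice with Python's asyncio: once with completion callbacks and once with async/await. Python is used only as a stand-in for JavaScript here, and `fetch_user()` and `fetch_orders()` are hypothetical:

```python
import asyncio


# Hypothetical data-access coroutines; asyncio.sleep(0) stands in for real I/O.
async def fetch_user(user_id: int) -> dict:
    await asyncio.sleep(0)
    return {"id": user_id, "name": "Ada"}


async def fetch_orders(user: dict) -> list:
    await asyncio.sleep(0)
    return [f"order-{user['id']}-1", f"order-{user['id']}-2"]


def summary_with_callbacks(user_id: int, on_done) -> None:
    """Callback style: each step registers what should happen once the previous one finishes."""
    loop = asyncio.get_running_loop()

    def after_orders(task, user):
        on_done(f"{user['name']}: {len(task.result())} orders")

    def after_user(task):
        user = task.result()
        orders_task = loop.create_task(fetch_orders(user))
        orders_task.add_done_callback(lambda t: after_orders(t, user))

    loop.create_task(fetch_user(user_id)).add_done_callback(after_user)


async def summary_with_await(user_id: int) -> str:
    """Async/await style: the same flow reads top to bottom, like synchronous code."""
    user = await fetch_user(user_id)
    orders = await fetch_orders(user)
    return f"{user['name']}: {len(orders)} orders"


async def run_callback_version(user_id: int) -> str:
    # Bridge the callback API back into a coroutine so both versions can be compared.
    done = asyncio.get_running_loop().create_future()
    summary_with_callbacks(user_id, done.set_result)
    return await done
```

Both produce the same string, but only the async/await version keeps the control flow in one place, which is what makes the keywords attractive.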

JavaScript developers can already write Async/Await code today by using TypeScript or other tools that transpile it for current runtimes. PHP can also be used for writing asynchronous code today, but the limiting factor is that many popular extensions, like MySQL, are not async-capable.

While PHP might get native Async/Await some day, Facebook's PHP fork Hacklang already has a robust Async/Await implementation that might make its way to PHP in some form. At least some of the minds behind Hacklang's implementation are toying with the idea:

@fredemmott Wouldn't hurt, but in truth I should suck it up and invent async for PHP.

— SaraMG (@SaraMG) September 16, 2016

The momentum is such that eventually PHP will get native asynchronous capabilities, and there is already an async interop group working on a set of shared standards. But it will still take quite a bit of time for the whole ecosystem to catch up.

In the meantime, you can use some of the approaches that allow you to go async in your Symfony controllers, where applicable. Async isn't all-or-nothing.
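As a language-neutral sketch of that idea (Python's asyncio, not a Symfony or PHP API), a synchronous handler can run just one async section, such as two independent lookups, and block until it completes. `fetch_price()`, `fetch_stock()` and `product_controller()` are hypothetical:

```python
import asyncio


# Hypothetical async lookups; asyncio.sleep stands in for calls to other services.
async def fetch_price(product_id: int) -> float:
    await asyncio.sleep(0.1)
    return 10.0 + product_id


async def fetch_stock(product_id: int) -> int:
    await asyncio.sleep(0.1)
    return 3 * product_id


async def load_product_data(product_id: int) -> list:
    # Only this slice of the request handling is asynchronous.
    return await asyncio.gather(fetch_price(product_id), fetch_stock(product_id))


def product_controller(product_id: int) -> dict:
    """A plain synchronous 'controller' that runs one async section and blocks on it."""
    price, stock = asyncio.run(load_product_data(product_id))
    # Everything after this point is ordinary synchronous code again.
    return {"id": product_id, "price": price, "in_stock": stock > 0}
```

One caveat of this sketch: `asyncio.run()` refuses to start while another event loop is already running in the same thread, so this bridge only works from code that is itself synchronous.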

PHP application servers to cut down overhead

While asynchronicity will undoubtedly unlock the biggest gains for web applications, which by nature mostly read and write data, there are other places where the overhead of running PHP can be lowered.

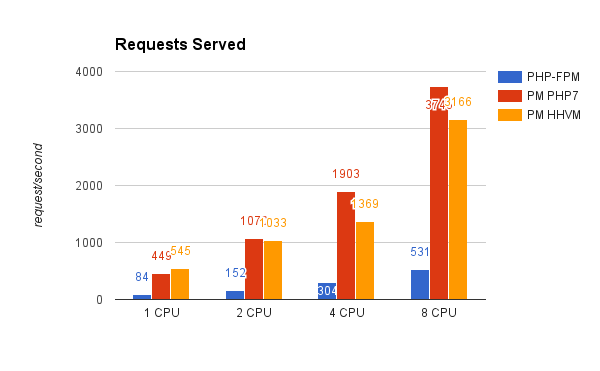

Application servers written in PHP have been around for quite a few years, and some are even running them in production. In practise this approach means that you will run a long-running PHP process that serves multiple requests. This offers quite a bit of potential for certain types of applications as this benchmark shows:

Application servers written in PHP can skip much of the bootstrapping overhead that a traditional setup pays on every request, but they're not without their issues. Your PHP application needs to be able to run this way (for example, it must not leak state from one request into the next), and popular implementations like PHP-PM and PHPFastCGI are not yet robust enough for mainstream use.

Future improvements to PHP itself

PHP 7.0 was a leap in performance that came with very easy adoption: simply verify compatibility with the new version and upgrade your server environment. Speeding up many apps with older architectures, like WordPress and MediaWiki, by a factor of two is a testament to its backwards compatibility.

In 7.1, the language runtime will continue to make modest improvements, but bigger gains will have to wait. One of these opportunities for a bigger improvement is a JIT implementation, which could land in PHP 8.0, as announced by Dmitry Stogov:

I'm glad to say that we have started a new JIT for PHP project and hope to deliver some useful results for the next PHP version (probably 8.0).

We are very early in the process and for now there isn't any real performance improvement yet. So far we spent just 2 weeks mainly working on JIT infrastructure for x86/x86_64 Linux (machine code generation, disassembling, debugging, profiling, etc), and we especially made the JIT code-generator as minimal and simple as possible. The current state, is going to be used as a starting point for research of different JIT approaches and their usability for PHP.

- http://news.php.net/php.internals/95531

This is not the first time the PHP development team has dabbled with a JIT. Initially PHP 7.0 was supposed to ship with one, but after failing to achieve notable results outside of synthetic benchmarks, the team decided to optimise other parts instead. In hindsight, rightfully so!

There are other ideas out there as well. Many contemporary PHP applications follow a very predictable bootstrapping sequence from a single entry point. In his post to the PHP internals mailing list, Michael Morris suggests that the result of this sequence could be cached to reduce overhead:

These apps then go through the process of initializing themselves and sometimes there are thousands of lines of code that get parsed through before the app is in ready state, the router finally loaded and a decision is made concerning the user's request.

- http://news.php.net/php.internals/95653
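Frameworks already do a userland version of this: Symfony, for example, compiles its service container and routes into cache files. The Python sketch below shows only that userland idea (build an expensive structure once, persist it, and load it on later requests), not the engine-level mechanism discussed on the list. `build_routes()`, `load_routes()` and the cache path are hypothetical, and cache invalidation is left out:

```python
import os
import pickle
import tempfile

# Hypothetical cache location.
CACHE_FILE = os.path.join(tempfile.gettempdir(), "app_routes.pickle")


def build_routes() -> dict:
    """Stand-in for the expensive part of bootstrapping, e.g. compiling a route table."""
    return {"/": "home", "/blog": "blog_index", "/blog/{slug}": "blog_post"}


def load_routes(cache_file: str = CACHE_FILE) -> tuple:
    """Return (routes, from_cache), rebuilding and persisting the table only on a miss."""
    if os.path.exists(cache_file):
        with open(cache_file, "rb") as fh:
            return pickle.load(fh), True
    routes = build_routes()
    with open(cache_file, "wb") as fh:
        pickle.dump(routes, fh)
    return routes, False
```

`pickle` should only be used on files the application wrote itself, as unpickling untrusted data can execute arbitrary code.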

Another idea on the Internals list is from Paul Jones, who suggests that the language should have native request and response objects:

From time to time we've all heard the complaint that PHP has no built-in request object to represent the execution environment. Userland ends up writing these themselves, and those are usually tied to a specific library collection or framework. The same is true for a response object, to handle the output going back to the web client. I've written them myself more than once, as have others here

- http://news.php.net/php.internals/96156
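In PHP terms, such objects would wrap superglobals like `$_SERVER` and `$_GET` on the way in, and output functions like `header()` and `echo` on the way out. The Python sketch below only illustrates the general shape of a request/response pair built from CGI-style environment variables; it is not the API proposed on the list, and the class and method names are hypothetical:

```python
from dataclasses import dataclass, field
from http import HTTPStatus
from urllib.parse import parse_qs


@dataclass(frozen=True)
class Request:
    """Read-only view of the incoming request (field names are illustrative)."""
    method: str
    path: str
    query: dict

    @classmethod
    def from_environ(cls, environ: dict) -> "Request":
        # REQUEST_METHOD, PATH_INFO and QUERY_STRING are standard CGI variables.
        return cls(
            method=environ.get("REQUEST_METHOD", "GET"),
            path=environ.get("PATH_INFO", "/"),
            query=parse_qs(environ.get("QUERY_STRING", "")),
        )


@dataclass
class Response:
    """Collects status, headers and body before anything is written to the client."""
    status: int = 200
    headers: dict = field(default_factory=dict)
    body: str = ""

    def render(self) -> str:
        status_line = f"HTTP/1.1 {self.status} {HTTPStatus(self.status).phrase}\r\n"
        header_lines = "".join(f"{name}: {value}\r\n" for name, value in self.headers.items())
        return status_line + header_lines + "\r\n" + self.body


# Usage with a hand-written environment, in place of what a server would provide.
request = Request.from_environ(
    {"REQUEST_METHOD": "GET", "PATH_INFO": "/hello", "QUERY_STRING": "name=Ada"}
)
response = Response(headers={"Content-Type": "text/plain"}, body=f"Hello, {request.query['name'][0]}")
print(response.render())
```

Each object is one more layer between the raw environment and the application code, which is the kind of overhead an abstraction layer brings.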

Request and response objects are already widely used in userland, for example in Symfony and other projects. While they make application architectures more elegant, they also add overhead as an additional abstraction layer. This is one reason why Drupal 8 is considerably slower than Drupal 7 in raw throughput. Abstractions come at a price.

In addition to the improvements mentioned above, there is always a chance that some off-beat approach, like running PHP on .NET Core, will mature enough to become a valid alternative to HHVM and the official PHP runtime. With Docker maturing and containerization becoming mainstream, running an exotic runtime will in itself soon be a non-issue: